The enterprise security landscape is undergoing a profound shift driven by a new dual-use AI breakthrough. With the rollout of Anthropic’s Claude Mythos Preview under the gated defense framework of Project Glasswing, the cybersecurity community has witnessed a massive leap in capability. Mythos has proven to be an extraordinary asset for defensive engineering, autonomously identifying over 10,000 critical software vulnerabilities across the world’s most systemically important infrastructure in a matter of weeks. Launch partners like Cloudflare and Mozilla reported bug-finding efficiencies scaling by more than 10 times compared to previous cycles.

However, the very properties that make Claude Mythos a defensive triumph — autonomous reasoning, multi-step exploit construction, and deep context analysis — also represent the next generation of risk if such frontier capabilities are used by unauthorized or malicious actors.

An autonomous exploit operates at machine speed:

T+0 minutes: AI agent discovers an AWS access key in a public repository

T+5 minutes: Validates credential, enumerates S3 buckets, identifies overly permissive IAM policies

T+12 minutes: Pivots to EC2 metadata service, extracts broader credentials

T+18 minutes: Locates database credentials, establishes persistence, begins exfiltration

Your security team's response time? Still awaiting human triage.

This isn't theoretical; it's the new baseline threat model. When an adversary can analyze codebases and chain zero-day vulnerabilities at machine velocity, reactive security models break down.

In light of these new AI superpowers, it’s not surprising I get a lot of questions about how organizations can protect themselves with existing tools and capabilities. Hint: The answer to this question does not require inventing an entirely new cryptographic paradigm or throwing out your current security stack of “mere mortal” capabilities. What’s missing is the rigorous, automated enforcement of current security best practices. The foundational principles of zero trust, identity-based access, and continuous secret hygiene can scale to neutralize autonomous threat vectors.

Mythos’ superpowers: Scale, speed, and context

To secure an enterprise against autonomous security analysis tools, we need to map their tactical behavior. When used in an offensive or unauthorized capacity, models of this kind do not rely on some new superpower; they exploit traditional, human-engineered security oversights at an unprecedented scale.

Autonomous chain construction: Standard fuzzers identify isolated bugs. An advanced model like Mythos reasons across a broader code architecture, discovering how a minor memory corruption flaw can be chained with a local sandbox escape to engineer a functional remote code execution (RCE) pathway.

Context-driven lateral movement: Upon gaining initial access to an environment, an autonomous agent executes automated post-exploitation playbooks. It parses environment variables, local file systems, configuration files, and system memory to harvest credentials.

Compressed exploitation windows: The gap between initial breach, asset discovery, and lateral movement shrinks from days to minutes. Current telemetry indicates that active automated exploit scans now begin within 15 minutes of a vulnerability or credential disclosure online. Human-in-the-loop triage networks cannot manually patch code or rotate credentials fast enough to outpace a machine-driven loop.

The key realization for defenders is that an autonomous agent is ultimately bound by the context it can discover. If you enforce rigorous security hygiene and eliminate static targets, the agent is deprived of actionable data.

Why traditional defenses fail

Traditional security fails against AI exploits for three fundamental reasons:

Human-in-the-loop bottlenecks: Your incident response assumes human decision-making at each stage — triage, correlation, response, validation. Even world-class SOCs take hours. AI exploits complete their mission in minutes.

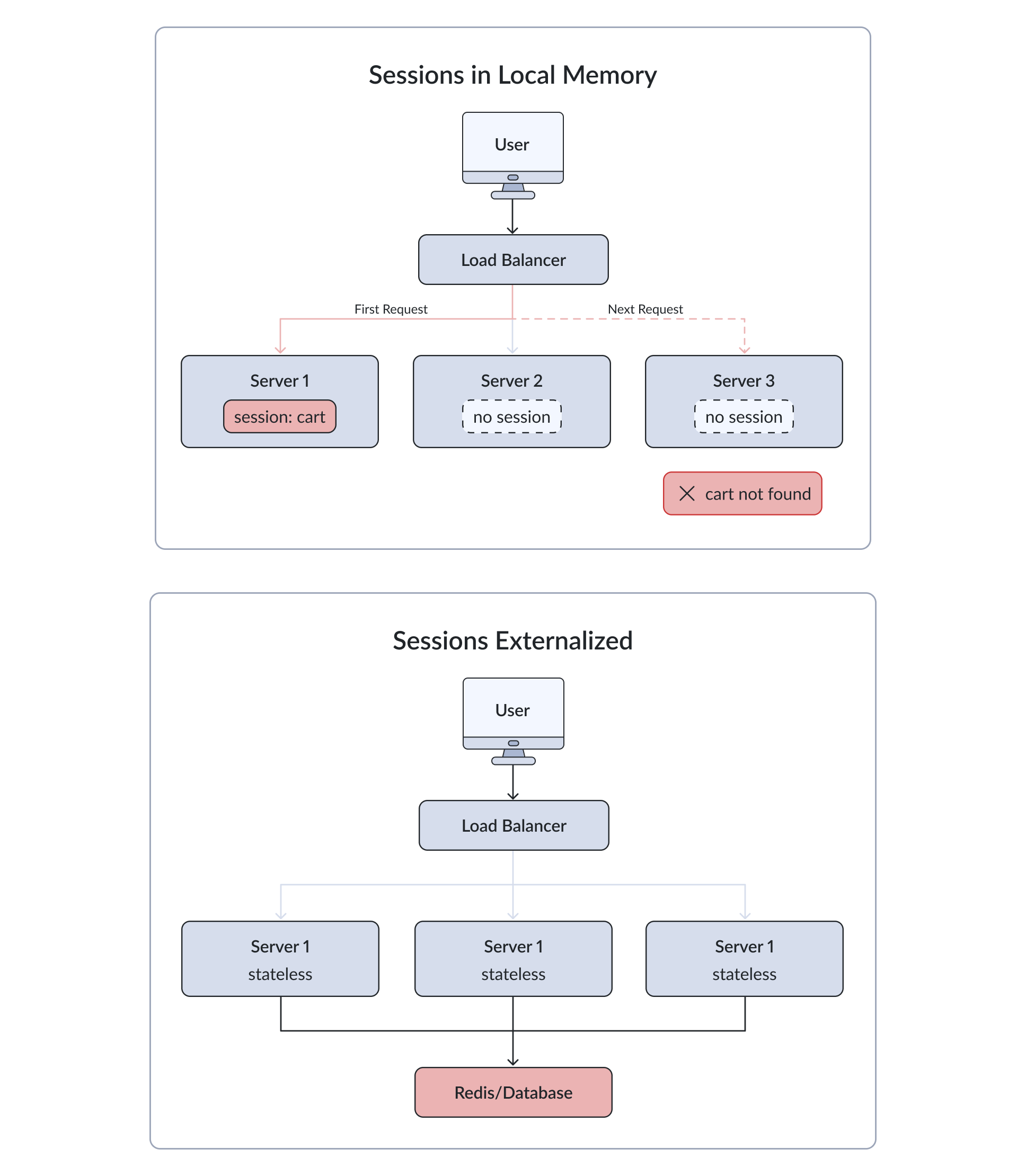

Static credential architecture: Long-lived credentials in environment variables, configuration files, and container secrets create persistent targets. AI agents don't need to crack encryption; they compromise systems by using legitimate credentials they find along the way.

Perimeter-based trust: Once inside your network, AI exploits leverage legitimate service-to-service communication and implicit trust relationships. Your firewall can't distinguish between authorized applications and autonomous agents operating with stolen credentials.

The defense framework: Current best practices at machine speed

In light of these new AI capabilities, organizations frequently ask how they can protect themselves with existing tools. The answer doesn't require inventing an entirely new cryptographic paradigm or replacing your current security stack. What's missing is the rigorous, automated enforcement of security best practices. Many organizations have documented policies around least-privilege access and secure secret storage, but they're not being implemented in an automated, scalable way.

The foundational principles of zero trust, identity-based access, and continuous secret hygiene can scale to neutralize autonomous threat vectors — when properly automated.

By pairing IBM Vault Radar with IBM Vault, organizations can harden their infrastructure against AI exploits using the same sound architectural practices that security teams have been championing for years. The critical difference is actually implementing and automating these practices at the speed of autonomous threats.

The defense framework rests on three principles:

Principle 1: Eliminate static targets through continuous secret discovery and remediation

Principle 2: Assume breach, and limit blast radius via identity-based access and dynamic credentials

Principle 3: Automate at machine speed to match the velocity of autonomous exploits

Preemptive hygiene: Starving the context window with Vault Radar

An autonomous model's intelligence is directly tied to the information it consumes. If an agent gains access to internal version control histories containing historical credentials, hardcoded metadata, or clear-text architecture maps, it can map an optimized path for lateral movement. The most effective defense is keeping a pristine, unexposed codebase. Vault Radar automates this best practice at enterprise scale, continuously monitoring changes and updates across your environments to find hidden secrets or sensitive information that might have been accidentally shared.

Eliminating "zombie" secrets

Autonomous systems are highly efficient at digging through entire version control system (VCS) histories to find forgotten credentials buried in legacy commits. Vault Radar automates code hygiene by executing deep historical scans. Crucially, it avoids traditional pitfalls of false-positive alert fatigue by separating dead placeholder strings from active production keys with "activeness" checks and by supporting custom allow lists for known benign tokens. Vault Radar also performs entropy analysis to discover complex keys that can be missed by traditional pattern scanning.
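To make the idea of entropy analysis concrete, here is a minimal sketch of how a scanner might score strings by Shannon entropy. It is an illustration only, not Vault Radar's implementation; the function names, the minimum length, and the threshold are all assumptions chosen for the example.

```python
import math
from collections import Counter


def shannon_entropy(text: str) -> float:
    """Return the Shannon entropy of text in bits per character."""
    if not text:
        return 0.0
    counts = Counter(text)
    total = len(text)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def looks_like_secret(token: str, min_length: int = 20, threshold: float = 3.5) -> bool:
    """Flag long tokens whose character distribution is close to random.

    min_length and threshold are illustrative values, not tuned defaults.
    """
    return len(token) >= min_length and shannon_entropy(token) >= threshold


if __name__ == "__main__":
    # Placeholder secret key from AWS documentation versus a dummy filler string.
    print(looks_like_secret("wJalrXUtnFEMI/K7MDENG/bPxRfiCYEXAMPLEKEY"))
    print(looks_like_secret("x" * 24))
```

A score like this complements pattern matching: it can surface keys with no recognizable prefix, but it still needs allow lists and activeness checks to keep false positives down.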

To maintain strict data privacy, Vault Radar uses cryptographic hashing (Argon2id with HMAC) to track discovered secrets without storing them in plain text — ensuring your security tool doesn't become a target itself while providing exact locations for remediation.
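The general idea of tracking a secret by a keyed digest rather than by its value can be sketched as follows. This example uses only HMAC-SHA256 from the Python standard library; Argon2id is left out because it is not in the standard library, so this is a simplified stand-in and not Vault Radar's actual scheme. The function names and the shape of the findings index are hypothetical.

```python
import hashlib
import hmac


def fingerprint(secret: str, tracking_key: bytes) -> str:
    """Return a keyed SHA-256 digest that identifies a secret without storing it."""
    return hmac.new(tracking_key, secret.encode("utf-8"), hashlib.sha256).hexdigest()


def same_secret(fp_a: str, fp_b: str) -> bool:
    """Compare two fingerprints in constant time."""
    return hmac.compare_digest(fp_a, fp_b)


def record_finding(index: dict, secret: str, location: str, tracking_key: bytes) -> None:
    """Store only the fingerprint and the location where the secret was seen."""
    index.setdefault(fingerprint(secret, tracking_key), []).append(location)
```

Because the index holds digests and locations only, the same leaked value found in two repositories maps to one entry, while someone who steals the index without the tracking key cannot read the secrets back out of it.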

IDE-level leak prevention

The legacy workflow of "leak first, rotate later" is entirely unviable against exploits running at machine speed. Vault Radar implements shift-left best practices by integrating directly into developer IDEs (such as VS Code) and gating GitHub pull requests. These built-in security capabilities also extend into the developer's IDE through IBM Concert Secure Coder, which leverages Vault Radar to detect and prioritize risks by business impact and generate automatic remediations as code is written, stopping vulnerabilities before they reach production. By blocking unmanaged tokens from ever hitting a remote repository, you eliminate the micro-windows of exposure that automated crawlers rely on.
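As a rough illustration of what a pre-merge gate does, the sketch below scans only the added lines of a unified diff for strings shaped like AWS access key IDs. It is not how Vault Radar or its IDE integration works internally. The regular expression assumes the commonly documented format (the prefix `AKIA` followed by 16 uppercase letters or digits), and the function name is hypothetical.

```python
import re

# Assumed format: "AKIA" followed by 16 uppercase letters or digits.
AWS_ACCESS_KEY_ID = re.compile(r"\b(AKIA[0-9A-Z]{16})\b")


def find_leaks_in_diff(diff_text: str) -> list:
    """Return (diff_line_number, match) pairs for key-like strings on added lines."""
    findings = []
    for number, line in enumerate(diff_text.splitlines(), start=1):
        # Only added lines matter; skip the "+++ b/file" header line.
        if not line.startswith("+") or line.startswith("+++"):
            continue
        for match in AWS_ACCESS_KEY_ID.finditer(line):
            findings.append((number, match.group(1)))
    return findings


def gate(diff_text: str) -> int:
    """Return a non-zero exit status when the diff introduces a key-like string."""
    return 1 if find_leaks_in_diff(diff_text) else 0
```

Wired into a pre-commit hook or a pull request check, a non-zero status from `gate` would stop the change before the token ever reaches a remote repository.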

PII and configuration scrubbing

Beyond cryptographic keys, autonomous agents use environmental context, such as exposed personally identifiable information (PII) or internal server names, to plan later-stage attacks. Vault Radar's multi-layered engine flags exposed PII and configuration leaks, allowing teams to sanitize metadata.

Runtime resilience: Enforcing identity-based zero trust

While preemptive hygiene severely limits an agent's reconnaissance, a true defense-in-depth framework assumes that an application-layer vulnerability will eventually be exploited. When an autonomous exploit successfully establishes a foothold, Vault uses established zero trust practices to minimize the blast radius.

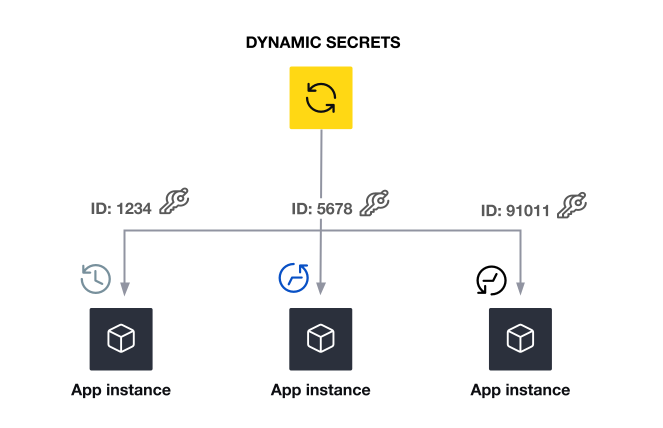

Dynamic secrets and just-in-time (JIT) credentialing

The most definitive method to neutralize an automated credential hunter is to ensure there are no static credentials on the file system to steal. Vault's dynamic secret engines generate scoped, ephemeral credentials across your entire infrastructure — AWS IAM, Azure Service Principals, GCP service accounts, databases (PostgreSQL, MongoDB, Oracle), PKI certificates, and SSH keys. No matter where an autonomous agent attempts to pivot, it encounters the same barrier: short-lived, context-aware credentials that expire before exploitation completes.

If an unauthorized agent triggers an RCE on a web server, it will find zero static database passwords in .env or YAML configurations. By the time the model processes the local file system and prepares its secondary lateral pivot, the temporary credential used by the legitimate application pool has likely expired or can be programmatically rotated.
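The timing argument can be worked through with a small sketch. The response body below mirrors the lease fields Vault returns with dynamic credentials (`lease_id`, `lease_duration`, `data`), but every value is made up, and the 10-minute TTL and helper names are assumptions for illustration.

```python
from datetime import datetime, timedelta, timezone

# Illustrative response body; field names follow Vault's lease-bearing
# responses, but every value here is made up.
SAMPLE_RESPONSE = {
    "lease_id": "database/creds/web-app/EXAMPLE",
    "lease_duration": 600,  # seconds; a hypothetical 10-minute TTL
    "renewable": True,
    "data": {"username": "v-web-app-EXAMPLE", "password": "EXAMPLE"},
}


def expires_at(issued_at: datetime, response: dict) -> datetime:
    """Compute when the leased credential stops working if it is never renewed."""
    return issued_at + timedelta(seconds=response["lease_duration"])


def usable_by_attacker(issued_at: datetime, response: dict, minutes_after_issue: int) -> bool:
    """Return True if a copy stolen earlier is still valid at the given offset."""
    attempt_at = issued_at + timedelta(minutes=minutes_after_issue)
    return attempt_at < expires_at(issued_at, response)


issued = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
for offset in (5, 12, 18):
    print(f"T+{offset} min usable: {usable_by_attacker(issued, SAMPLE_RESPONSE, offset)}")
```

With a TTL shorter than the attacker's pivot time, the stolen copy is already dead by the later stages of the timeline; the shorter the lease, the earlier in the chain that cutoff falls.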

Cryptographic identity over network trust

Autonomous agents are experts at navigating network topology, seeking out unauthenticated lateral routes between subnets or permissive internal security groups. Vault neutralizes this advantage by shifting the security boundary entirely from network topology to cryptographic identity.

Applications must authenticate to Vault with a verifiable identity (such as an OIDC or JWT token, an AWS IAM role, or a Kubernetes service account). Even if an agent maps an open network route to a sensitive internal database API, it cannot extract operational secrets from Vault without presenting a valid, signed identity token. Vault administrators can also enforce strict isolation policies, locking secret access to explicit CIDR blocks or tight time windows, so that external agents cannot reuse stolen machine contexts from outside those bounds.
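The effect of binding access to CIDR blocks can be illustrated with a short check. Vault auth roles expose a `token_bound_cidrs` setting for this purpose; the function below only sketches the semantics of such a check and is not Vault's code. The function name and the example addresses are hypothetical.

```python
import ipaddress


def source_allowed(source_ip: str, bound_cidrs: list) -> bool:
    """Return True when the caller's address falls inside any bound CIDR block."""
    address = ipaddress.ip_address(source_ip)
    return any(address in ipaddress.ip_network(cidr) for cidr in bound_cidrs)


if __name__ == "__main__":
    bound = ["10.20.0.0/24"]  # hypothetical subnet of the legitimate workload
    print(source_allowed("10.20.0.15", bound))   # request from inside the subnet
    print(source_allowed("203.0.113.7", bound))  # same token replayed from elsewhere
```

A token that is valid in every other respect is still refused when it is presented from an address outside the bound range, which is what blunts credential replay from attacker-controlled infrastructure.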

Automated lifecycle management

Defending at machine velocity requires automated orchestration. Vault provides the mechanism to execute response playbooks programmatically:

Credential rotation: For legacy infrastructure where using true dynamic, short-lived credentials is not feasible, mitigating risk requires high-frequency rotation. Automated credential rotation in Vault Enterprise enables this process. This capability handles complex lifecycles (such as LDAP static roles) with centralized scheduling, intelligent retries with exponential backoff, and administrative pause/resume controls. By moving traditional, static accounts to automated, high-frequency rotation schedules, you drastically narrow the viability window of any intercepted credential.

Rapid global revocation: If an intrusion detection system (IDS) flags an active compromise on a workload, a single automated API call to Vault can immediately revoke every active lease tied to that workload's identity, instantly dropping the attacker’s authorization across multi-cloud environments.

Secret remediation: Vault Radar provides a closed-loop approach to quickly remediate unsecure secrets when they are discovered. At discovery, teams get real-time alerts with contextual guidance and the ability to import discovered secrets directly into Vault for secure management, enabling actions like rotation and revocation to minimize risks associated with credential exposure.
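The "single automated API call" described under rapid global revocation might look like the sketch below. It builds, but does not send, a request to Vault's token `revoke-accessor` endpoint; revoking a token also revokes the leases created with it. The sketch assumes the responder already knows the accessor of the compromised workload's token and holds a token permitted to revoke it. The address, token, and accessor values are placeholders.

```python
import json
import urllib.request


def build_revoke_request(vault_addr: str, admin_token: str, accessor: str) -> urllib.request.Request:
    """Build a POST that revokes a token, and the leases it created, by accessor."""
    body = json.dumps({"accessor": accessor}).encode("utf-8")
    return urllib.request.Request(
        url=f"{vault_addr.rstrip('/')}/v1/auth/token/revoke-accessor",
        data=body,
        method="POST",
        headers={"X-Vault-Token": admin_token, "Content-Type": "application/json"},
    )


if __name__ == "__main__":
    # Placeholder values; a real playbook would take these from the IDS alert.
    request = build_revoke_request("https://vault.example.com:8200", "EXAMPLE-TOKEN", "EXAMPLE-ACCESSOR")
    print(request.get_method(), request.full_url)
```

An orchestration hook would pass the built request to `urllib.request.urlopen` when the IDS alert fires. Keeping construction separate from sending makes the playbook easy to test without a live Vault server.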

Conclusion: The security bar has risen, but security best practices still apply

The emergence of frontier models like Claude Mythos marks an inflection point: Software analysis velocity has accelerated exponentially, but the defense blueprint remains unchanged. What's different is the margin for error. Quarterly rotation cycles, manual remediation, and human-in-the-loop responses are no longer viable.

The solution doesn't require new security paradigms — it requires operationalizing and automating principles security experts have championed for years. Organizations must operate in continuous discovery and response mode, reducing exploitability through elimination of static secrets, limiting blast radius via identity-based access, and integrating security into every decision.

By deploying Vault Radar for continuous monitoring and Vault for dynamic, identity-based authorization, you create an environment devoid of the static targets autonomous exploits require. The foundational security practices you implement today ensure your architecture remains resilient, regardless of how rapidly threats evolve.

Ready to bring machine-speed security to your infrastructure?

Explore the Vault Radar Quickstart tutorial to start scanning your repositories, auditing local environments, and neutralizing "zombie" credentials before they can be exploited.

To eliminate static targets at runtime, check out the Vault Documentation to learn more about setting up dynamic, just-in-time secrets engines and configuring automated credential rotations.

from HashiCorp Blog https://ift.tt/MvItk0H